Understanding Google’s Search Algorithm: How Does It Rank Pages?

1. Decoding Google's Digital Librarian

The Google Search algorithm stands as one of the most complex and influential technological systems in the modern world, acting as the primary gateway to information for billions of users daily. It is not a singular, monolithic entity but rather a sophisticated ecosystem comprising multiple algorithms, machine learning models, and data processing techniques.1 These components work in concert to crawl the vast expanse of the internet, index its content, and rank billions of web pages in response to user queries, all within fractions of a second.7 The algorithm's operation is characterized by its dynamic nature; Google implements thousands of changes each year, ranging from subtle adjustments to significant "core updates" that can reshape search engine results pages (SERPs).8 This constant evolution reflects Google's relentless pursuit of improving the quality and relevance of search results, adapting to the ever-changing landscape of web content and user search behaviors.5

Google's Core Mission: Organizing the World's Information

The foundational principle guiding the development and refinement of Google's search algorithm is the company's official mission statement: "to organize the world's information and make it universally accessible and useful".14 This mission translates directly into the algorithm's primary objectives. Key tenets derived from this mission dictate that Search should deliver the most relevant and reliable information available, maximize access to a diverse range of sources, present information in the most useful formats (text, images, maps, etc.), protect user privacy through robust controls and security, and fund these operations via clearly labeled advertising without selling personal information or allowing advertisers to pay for better organic rankings.14

The algorithm, therefore, is the technological embodiment of this mission. It is the mechanism through which Google attempts to sift through the immense volume of information on the web—an index covering hundreds of billions of pages and exceeding 100 million gigabytes 24—to find and present the most helpful and trustworthy answers to user queries.7 The emphasis on relevance, reliability, usability, and accessibility within the ranking factors directly mirrors the core components of Google's mission statement.14 Understanding this mission provides a crucial framework for comprehending why Google prioritizes certain ranking signals and continually updates its systems.

A Brief History: From PageRank to AI Dominance

Google's journey began in 1996 at Stanford University with a research project called BackRub, initiated by graduate students Larry Page and Sergey Brin.29 The cornerstone of BackRub, and later Google Search, was the PageRank algorithm.31 PageRank introduced a revolutionary concept: determining the importance of a webpage by analyzing the quantity and quality of links pointing to it from other pages. The underlying assumption was that more important websites would naturally attract more links from other significant sites, akin to citations in academic literature.31 This link-based analysis was a key differentiator that propelled Google ahead of its early competitors.1 The PageRank patent itself is owned by Stanford University.33
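The link-as-citation idea can be illustrated with a short power-iteration sketch. This is a simplified textbook version of PageRank, not Google's production implementation; the damping factor of 0.85, the iteration count, and the tiny four-page graph are illustrative assumptions.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by power iteration.

    `links` maps each page to the list of pages it links to.
    """
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Every page receives a small baseline share of rank.
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its damped rank evenly among its outlinks.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Dangling page: spread its rank evenly over all pages.
                share = damping * rank[page] / n
                for target in pages:
                    new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical link graph: page "c" is linked to by every other page.
graph = {
    "a": ["b", "c"],
    "b": ["c"],
    "c": ["a"],
    "d": ["c"],
}
ranks = pagerank(graph)
best = max(ranks, key=ranks.get)
print(best, {page: round(value, 3) for page, value in sorted(ranks.items())})
```

In this toy graph the most-linked page ends up with the highest score, and a page with no inbound links keeps only the baseline share.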

While PageRank was foundational, the Google algorithm has evolved dramatically since its inception. Early updates, such as "Boston" and "Florida" in 2003, marked the beginning of Google's efforts to refine results and combat manipulative Search Engine Optimization (SEO) tactics that emerged in response to PageRank.29 Today, while PageRank remains a part of the ranking system 2, it is just one among hundreds of signals.36 The modern Google algorithm is a vastly more complex engine, heavily reliant on artificial intelligence (AI) and machine learning (ML).5 Key milestones like the Hummingbird update (focusing on semantic understanding), RankBrain (using ML for query interpretation), BERT (understanding language context), and the rise of AI Overviews signify this profound shift.9 This evolution was necessitated by the exponential growth of the web, the increasing complexity of user queries, and the constant need to understand content quality and user intent far beyond simple link counts.24

2. The Engine Room: How Google Finds and Organizes Content

Before Google can rank any webpage, it must first discover and understand its content. This involves a multi-stage process encompassing crawling, indexing, and finally, serving results.

Crawling the Web: Discovering the Digital Universe

Crawling is the fundamental discovery process in which Google employs automated programs, known collectively as Googlebot (such programs are also called spiders, robots, or crawlers), to systematically navigate the web and find new or updated content.5 This content can range from entire webpages to individual text segments, images, videos, PDFs, and more.53

Google discovers URLs through several primary methods. The most common is by following hyperlinks from pages it already knows about.21 When Googlebot crawls a known page, it extracts any links present and adds new or updated URLs to its list for future crawling. Another method is through XML sitemaps, which are files created by website owners listing the URLs they want Google to discover and crawl.21 Website owners can also submit individual URLs or sitemaps directly via Google Search Console.54
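Link-based discovery can be sketched with the Python standard library alone. The example below is only an illustration of the general idea (extract links from a known page, resolve them, queue the unseen ones); the class and function names, the sample HTML, and the example.com URLs are hypothetical, and a real crawler does far more.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin


class LinkExtractor(HTMLParser):
    """Collects absolute URLs from the <a href> tags of one page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href:
            # Resolve relative links and drop the #fragment part.
            absolute, _fragment = urldefrag(urljoin(self.base_url, href))
            self.links.append(absolute)


def discover(page_url, html, seen, frontier):
    """Adds not-yet-seen HTTP(S) links found in `html` to the crawl frontier."""
    parser = LinkExtractor(page_url)
    parser.feed(html)
    for url in parser.links:
        if url.startswith(("http://", "https://")) and url not in seen:
            seen.add(url)
            frontier.append(url)


SAMPLE_HTML = """
<a href="/about">About</a>
<a href="https://example.com/about#team">Team</a>
<a href="mailto:hello@example.com">Mail</a>
<a href="blog/post-1">Post</a>
"""

seen = {"https://example.com/"}
frontier = deque()
discover("https://example.com/", SAMPLE_HTML, seen, frontier)
print(list(frontier))
```

The two links to the "about" page collapse into one queue entry, and the mailto link is skipped because it is not a crawlable web URL.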

Googlebot itself operates through an algorithmic process, determining which sites to crawl, how frequently, and how many pages to fetch from each site.21 There are different versions of Googlebot, primarily Googlebot Smartphone and Googlebot Desktop, identifiable by their user-agent strings in HTTP requests.58 Reflecting the prevalence of mobile usage, Google primarily uses the mobile crawler (mobile-first indexing).6 To prevent overwhelming websites, Googlebot is programmed to limit its crawl rate, adjusting its speed based on the site's responsiveness (e.g., slowing down if it encounters server errors like HTTP 500s).21 The amount of resources Google allocates to crawling a specific site is often referred to as the "crawl budget," influenced by factors like crawl rate limits and crawl demand (how often content is updated or deemed important).53 Googlebot typically crawls the first 15MB of an HTML or supported text-based file.58

A critical aspect of modern crawling is rendering. Because many websites rely on JavaScript to load content dynamically, simply fetching the initial HTML is insufficient. Googlebot uses a rendering service (Web Rendering Service or WRS) based on a recent version of Chrome to execute JavaScript and render the page much like a user's browser does.21 This ensures Google can "see" content that isn't present in the initial HTML source. This rendering process involves fetching additional resources like CSS and JavaScript files, which also consumes crawl budget.55 The WRS caches these resources for up to 30 days to improve efficiency.55

However, crawling is not always successful. Common issues that prevent Googlebot from accessing or understanding content include server errors, network problems, robots.txt directives explicitly blocking the crawler, pages requiring logins, complex site architectures hindering navigation, or JavaScript errors preventing proper rendering.21 Webmasters can facilitate crawling by submitting sitemaps, ensuring their robots.txt file isn't overly restrictive, maintaining good site speed and server health, implementing logical internal linking, and fixing crawl errors reported in Search Console.53 The ability for Google to successfully crawl and render a page is a fundamental prerequisite for it to be considered for indexing and ranking.
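One of the blockers mentioned above, an overly restrictive robots.txt file, can be checked locally with Python's standard `urllib.robotparser` module. The rules and URLs below are made up for illustration. Note that the standard-library parser applies the first matching rule, which may differ from how Google resolves conflicting Allow and Disallow rules, so treat this as a rough check rather than a faithful simulation of Googlebot.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

User-agent: Googlebot
Disallow: /drafts/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

checks = [
    ("Googlebot", "https://example.com/drafts/post"),
    ("Googlebot", "https://example.com/private/report"),
    ("OtherBot", "https://example.com/private/report"),
    ("OtherBot", "https://example.com/blog/"),
]
for agent, url in checks:
    verdict = "allowed" if parser.can_fetch(agent, url) else "blocked"
    print(f"{agent:10} {url:40} {verdict}")
```

Because a crawler follows only the most specific group that names it, the Googlebot group here overrides the wildcard group, so `/private/` stays crawlable for Googlebot even though it is blocked for other agents. This is a common source of accidental misconfiguration.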

Indexing the Information: Building the Knowledge Base

Once a page has been successfully crawled and rendered, Google attempts to understand its content and store relevant information in the Google index, which is built by the indexing system known as "Caffeine".5 This index is an enormous, distributed database hosted across thousands of computers, containing information on hundreds of billions of webpages and exceeding 100 million gigabytes in size.24 Recent testimony suggests the index holds approximately 400 billion documents, though this number fluctuates.25 The index functions much like the index at the back of a book, creating entries for words and concepts and noting the pages where they appear.24
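The back-of-the-book analogy corresponds to a data structure usually called an inverted index. The sketch below is a toy version under obvious simplifying assumptions (three hypothetical documents, lowercase word matching, no ranking); it is meant only to show the shape of the idea, not how Google's index is actually built.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Lowercases text and splits it into alphanumeric terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(documents):
    """Maps each term to the set of document IDs that contain it."""
    index = defaultdict(set)
    for doc_id, text in documents.items():
        for term in tokenize(text):
            index[term].add(doc_id)
    return index


def search(index, query):
    """Returns the documents that contain every term of the query."""
    terms = tokenize(query)
    if not terms:
        return set()
    result = set(index.get(terms[0], set()))
    for term in terms[1:]:
        result &= index.get(term, set())
    return result


# Hypothetical mini-corpus.
documents = {
    "page1": "How to tie a tie in five steps",
    "page2": "Climate change explained",
    "page3": "Five facts about climate and weather",
}
index = build_index(documents)
print(sorted(search(index, "climate")))
print(sorted(search(index, "five climate")))
```

Looking up a term is a dictionary access rather than a scan of every document, which is why this structure scales to very large collections.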

The indexing process involves analyzing various elements of the page:

- Textual Content: Analyzing the words, phrases, and overall topics discussed.

- Key HTML Tags: Paying attention to content within tags like <title>, heading tags (<h1>, <h2>, etc.), and meta descriptions (though descriptions aren't a direct ranking factor, they influence snippets and click-through rates).21

- Attributes: Analyzing attributes like image alt text.21

- Media: Cataloging and analyzing images and videos.21

- Structure & Metadata: Understanding the page's structure and any embedded metadata.69

During indexing, Google also performs critical analysis regarding duplication and canonicalization.21 It determines if a page is a duplicate or near-duplicate of another page already known to Google. If duplicates are found, Google identifies the canonical version – the representative URL that should be shown in search results.21 This prevents multiple versions of the same content from competing against each other and consolidates ranking signals (like links) to the preferred URL.
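Duplicate grouping and canonical selection can be sketched as follows. Everything here is an assumption made for illustration: exact matching on a normalized-text hash stands in for near-duplicate detection, and the preference rule (HTTPS first, then the shortest URL) is a hypothetical heuristic. Google weighs many more signals, including rel="canonical" hints, redirects, and sitemaps.

```python
import hashlib
from collections import defaultdict


def fingerprint(text):
    """Hashes the text after normalizing case and whitespace."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def choose_canonical(urls):
    """Hypothetical rule: prefer HTTPS, then the shortest URL, then A-Z."""
    return min(urls, key=lambda u: (not u.startswith("https://"), len(u), u))


def group_duplicates(pages):
    """Returns {canonical_url: [all URLs with the same content]}."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[fingerprint(text)].append(url)
    return {choose_canonical(urls): sorted(urls) for urls in groups.values()}


# Hypothetical crawl results: three URLs serve the same content.
pages = {
    "http://example.com/shoes": "Red  shoes on sale",
    "https://example.com/shoes": "red shoes on sale",
    "https://example.com/shoes?ref=nav": "Red shoes on sale",
    "https://example.com/hats": "Blue hats",
}
clusters = group_duplicates(pages)
for canonical, members in sorted(clusters.items()):
    print(canonical, "<-", members)
```

Once a cluster has a single representative URL, signals such as inbound links can be attributed to that one URL instead of being split across the variants.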

Crucially, indexing is not guaranteed for every page Google crawls.21 Google actively filters content during this stage. Pages may not be indexed if:

- The content quality is deemed low.21

- Robots meta tags (noindex) or HTTP headers (X-Robots-Tag: noindex) instruct Google not to index the page.21

- The website's design or technical setup makes indexing difficult.21

- The content is considered spam or violates Google's policies.25
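The noindex case in the list above is easy to check programmatically. The following sketch looks for the directive in a robots meta tag and in an X-Robots-Tag response header; the helper names and sample inputs are hypothetical, and the parsing is deliberately simplified (for example, it ignores user-agent prefixes inside the header value).

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> or name="googlebot"."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            for directive in attr.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def is_noindex(html, headers):
    """True if the page or its response headers carry a noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header_value = ""
    for name, value in headers.items():
        # Header names are case-insensitive.
        if name.lower() == "x-robots-tag":
            header_value = value
    header_directives = {d.strip().lower() for d in header_value.split(",")}
    return "noindex" in parser.directives or "noindex" in header_directives


print(is_noindex('<meta name="robots" content="noindex, follow">', {}))
print(is_noindex("<p>Hello</p>", {"X-Robots-Tag": "noindex"}))
print(is_noindex("<p>Hello</p>", {"Content-Type": "text/html"}))
```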

Essentially, indexing acts as a significant quality filter. Google discovers trillions of pages but indexes only a fraction: the hundreds of billions it deems potentially valuable, accessible, and non-duplicative.24 Google Search Console provides reports such as the Page Indexing report, and its URL Inspection tool lets webmasters check the indexing status of specific pages.54

Serving & Ranking: Delivering Relevant Results

The final stage occurs when a user submits a query. Google's systems search the massive index for pages that match the query's intent and context.19 The core task is then to rank these potentially relevant pages and present them to the user in an ordered list on the Search Engine Results Page (SERP), aiming to surface the highest quality and most useful information first.19

This ranking process is incredibly complex, relying on hundreds of different factors or "signals".4 The weight or importance assigned to each signal is not fixed; it varies dynamically depending on the nature of the user's query.20 For example, for a query like "latest news on earthquake," the freshness of the content might be weighted very heavily. For a query about medical advice, signals related to expertise, authoritativeness, and trustworthiness (E-E-A-T) will likely receive greater weight.38 For a search like "restaurants near me," location proximity becomes paramount.20
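The idea that signal weights shift with the query can be made concrete with a toy scoring function. Every number and name below is invented for illustration: Google's actual signals and weights are proprietary, and real ranking is not a simple weighted sum.

```python
# Hypothetical weight profiles per query type (each sums to 1.0).
WEIGHT_PROFILES = {
    "news": {"relevance": 0.3, "quality": 0.2, "freshness": 0.4, "proximity": 0.1},
    "medical": {"relevance": 0.3, "quality": 0.6, "freshness": 0.1, "proximity": 0.0},
    "local": {"relevance": 0.3, "quality": 0.2, "freshness": 0.0, "proximity": 0.5},
}


def score(page_signals, query_type):
    """Weighted sum of a page's signals using the profile for the query type."""
    weights = WEIGHT_PROFILES[query_type]
    return sum(weights[name] * page_signals.get(name, 0.0) for name in weights)


def rank(pages, query_type):
    """Returns page names ordered from highest to lowest score."""
    return sorted(pages, key=lambda name: score(pages[name], query_type), reverse=True)


# Hypothetical pages with signal values between 0 and 1.
pages = {
    "fresh_blog": {"relevance": 0.8, "quality": 0.4, "freshness": 0.9},
    "medical_journal": {"relevance": 0.8, "quality": 0.95, "freshness": 0.3},
}
print(rank(pages, "news"))
print(rank(pages, "medical"))
```

The same two pages swap positions depending on the profile: the fresher page leads for the news-style query, while the higher-quality page leads for the medical-style query.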

The key categories of signals that Google's algorithms evaluate, explored in more detail in the next section, are:

- Meaning: Understanding the intent behind the query's words.

- Relevance: How well the content on a page matches the query's intent.

- Quality: Assessing the expertise, authoritativeness, trustworthiness, and overall helpfulness of the content.

- Usability: Evaluating the page experience, including speed, mobile-friendliness, and security.

- Context: Considering the user's location, search history, and settings for personalization.20

Given the billions of searches processed daily and the vastness of the index, this entire ranking process is fully automated.7 Google continually refines these automated systems through rigorous testing, including live traffic experiments and evaluations by human Search Quality Raters.7 It's also crucial to reiterate that placement in Google's organic search results cannot be purchased; advertising systems are entirely separate and clearly labeled.14 The dynamic and contextual nature of ranking means that achieving high visibility requires a holistic approach, understanding that the importance of different factors shifts based on the specific search scenario.

3. The Anatomy of Ranking: Key Signal Categories

Google's ranking algorithms evaluate a multitude of signals to determine the order of search results. While the exact weighting is proprietary and dynamic, these signals can be broadly categorized into factors related to content, links, user experience, and technical setup.

Content is King: Relevance, Quality, and E-E-A-T

Content forms the core of what users search for, and Google's algorithms place immense importance on its attributes.20

- Query Relevance and Semantic Matching: Google has moved far beyond simply matching keywords in a query to keywords on a page.44 The focus now is on understanding the meaning (semantics) and intent behind the search query.5 Advanced AI systems, including Natural Language Processing (NLP), BERT, and Neural Matching, analyze synonyms, related concepts, and the contextual relationships between words to grasp what the user is truly looking for.1 While keywords still serve as a basic relevance signal, especially when appearing in important page elements like titles, headings (H1, H2, etc.), body text, and URLs 11, the algorithms look for deeper conceptual alignment. Systems like passage ranking can even identify and rank specific sections within a longer page if that passage is highly relevant to a niche query.1

- Content Quality, Depth, and Originality: Identifying relevant content is only the first step; Google's systems then aim to prioritize what seems most helpful and reliable.20 High-quality content is typically comprehensive, insightful, accurate, clearly written, and provides substantial value beyond just summarizing other sources.79 The principles of the Helpful Content System, now integrated into core ranking, emphasize rewarding content created for human users that provides a satisfying experience and demonstrates depth of knowledge.2 Content that covers a topic thoroughly ("depth") tends to perform better than superficial treatments 2, although there's no magic word count.28 Originality is also highly valued; Google aims to surface original reporting, research, and analysis ahead of content that merely aggregates or duplicates information from elsewhere.1 Duplicate content can lead to pages being filtered out of results or, where the duplication is deceptive, penalized, which makes canonicalization strategies essential.6 Conversely, content created primarily to attract search engines, using extensive automation without adding value, or lacking real expertise, is likely to be demoted.28

- Freshness Signals: For certain types of queries, particularly those related to current events, trending topics, or information that changes regularly, Google's "query deserves freshness" (QDF) systems prioritize more recently published or updated content.2 Significant updates to existing content can also signal freshness and relevance to Google's algorithms.11 The date of the last update might even be displayed in search results.80

- E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness): This framework is central to Google's assessment of content quality, particularly for sensitive "Your Money or Your Life" (YMYL) topics like health, finance, and safety.6

- Experience: Added in December 2022 105, this dimension assesses whether the content creator demonstrates practical, first-hand experience with the topic (e.g., actually using a product, visiting a place, navigating a process). This addition is widely seen as a response to the proliferation of AI-generated content, emphasizing the value of authentic human insight.12

- Expertise: Refers to the creator possessing the necessary skills and knowledge in the subject area. This is particularly crucial for complex or YMYL topics where accuracy is paramount.96

- Authoritativeness: Relates to the reputation and recognition of the creator or the website as a go-to source for the topic. Signals include high-quality backlinks from other relevant, authoritative sites, mentions, and overall brand recognition.97

- Trustworthiness: Considered the most critical element 96, trust encompasses the accuracy, honesty, safety, and reliability of the content and the website. Signals include clear sourcing, secure connections (HTTPS), transparent author information, easily accessible contact details, and positive reputation/reviews.96

- Evaluation: While E-E-A-T isn't a direct ranking factor or score 75, Google's algorithms identify signals that correlate with these attributes to prioritize helpful and reliable content.20 Google's human Quality Raters explicitly use the E-E-A-T framework in their guidelines to evaluate search result quality, providing crucial feedback for algorithm refinement.7 For YMYL queries, Google explicitly gives more weight to signals related to authoritativeness, expertise, and trustworthiness.38 Demonstrating E-E-A-T involves showcasing author credentials, providing clear evidence and sourcing, building a positive reputation, ensuring site security, and focusing on accuracy.85 Google encourages creators to use the "Who, How, and Why" framework to self-assess their content's alignment with E-E-A-T principles.96

The Power of Links: Authority and Trust

Backlinks (links from external websites to your own) were the cornerstone of Google's original PageRank algorithm and remain a significant factor in determining a page's authority and relevance.1

- Beyond PageRank: Analyzing Backlink Quality, Quantity, and Relevance: While the fundamental idea of links as "votes" or endorsements persists 1, Google's analysis has become far more sophisticated. The emphasis has shifted decisively from link quantity to link quality.1 A handful of editorially placed links from highly relevant and authoritative websites carry significantly more weight than numerous links from low-quality or unrelated sources.35 Key quality indicators include:

- Relevance: Links from sites within the same niche or discussing topically related content are more valuable.34 The context surrounding the link on the source page is also analyzed.34

- Authority: Links from domains perceived as authoritative and trustworthy (e.g., established industry sites, news organizations, educational institutions) pass more "authority" or "link equity".35 While third-party metrics like Domain Authority exist, Google uses its own internal signals, including PageRank.2

- Placement: Links embedded within the main body content are generally viewed as more editorially significant than links placed in footers, sidebars, or boilerplate templates.34

- Diversity: A natural, healthy backlink profile typically consists of links from a variety of different domains and types of sites.37

- The Role of Anchor Text: Anchor text, the visible, clickable text of a hyperlink, serves as a contextual signal to Google about the topic of the linked page.128 Using relevant and descriptive anchor text can be beneficial.37 However, a history of manipulation through exact-match keyword anchor text means that Google's algorithms are highly sensitive to over-optimization.61 Excessive use of keyword-rich anchors is a strong spam signal. A natural anchor text profile includes a mix of branded terms, naked URLs, generic phrases ("click here"), partial matches, and some exact matches.37 Google also analyzes the text surrounding the anchor for additional context.34

- Combating Link Spam: Google has dedicated significant resources to identifying and neutralizing manipulative link practices. The Penguin update, first launched in 2012 and now part of the core algorithm, specifically targeted tactics like link schemes, paid links, low-quality directory submissions, and unnatural anchor text.50 More recently, AI-powered systems like SpamBrain actively identify sites involved in buying or selling links for ranking purposes, as well as sites created solely to pass links.143 Google's policy explicitly prohibits links intended primarily for artificial manipulation.145 Rather than solely penalizing sites, recent link spam updates often focus on neutralizing the value of spammy links, meaning any ranking benefit they might have provided is simply lost.143 Attributes like rel="nofollow", rel="sponsored", and rel="ugc" provide hints to Google about the nature of links, though Google might still use them for discovery.34 The Disavow tool allows webmasters to ask Google to ignore specific incoming links they believe are harmful or were acquired unnaturally.34 The clear message is that earning high-quality, relevant links editorially through valuable content is the only sustainable approach.130
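The rel attributes mentioned above can be audited on a page's outbound links with a few lines of standard-library Python. This is a minimal sketch with hypothetical sample markup and names; it only reports which hint values each link carries and makes no claim about how Google treats them.

```python
from html.parser import HTMLParser

# Link attributes that act as hints about the nature of a link.
HINT_VALUES = {"nofollow", "sponsored", "ugc"}


class RelAuditor(HTMLParser):
    """Records each link's href together with any rel hints it carries."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr = dict(attrs)
        href = attr.get("href")
        if not href:
            return
        # rel may hold several space-separated tokens.
        rel_tokens = set((attr.get("rel") or "").lower().split())
        self.links.append((href, sorted(rel_tokens & HINT_VALUES)))


SAMPLE_HTML = """
<a href="https://partner.example/">Editorial mention</a>
<a href="https://shop.example/deal" rel="sponsored noopener">Paid placement</a>
<a href="https://forum.example/u/1" rel="ugc nofollow">User comment link</a>
"""

auditor = RelAuditor()
auditor.feed(SAMPLE_HTML)
for href, hints in auditor.links:
    print(href, hints or "no hints")
```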

User Experience Matters: Making Pages Delightful

Google increasingly emphasizes the importance of user experience (UX) on a webpage, recognizing that even relevant content is unhelpful if the page is slow, difficult to use, or insecure.4

- Page Experience Signal: Google introduced "Page Experience" as a concept encompassing several signals that measure how users perceive their interaction with a page.1 While there isn't one single Page Experience score used for ranking 26, its components are evaluated by core ranking systems. Providing a good overall page experience aligns with what Google seeks to reward.26 Key components include:

- Page Speed and Performance: How quickly a page loads is a critical UX factor and a confirmed ranking signal.63 Slow pages lead to higher bounce rates and user frustration.62 Google provides tools like PageSpeed Insights and Lighthouse for measurement and diagnosis.27 Optimizations involve image compression, code minification, server response improvements, and caching.62

- Mobile-Friendliness and Mobile-First Indexing: With the majority of searches occurring on mobile devices 63, Google primarily uses the mobile version of a site for indexing and ranking (Mobile-First Indexing).4 A site must be responsive and provide a good experience on smaller screens, with readable text and appropriately sized touch targets, to rank well in mobile results.63 Google long offered a Mobile-Friendly Test and usability reports in Search Console,64 although both were retired in late 2023; Lighthouse can be used for similar checks.

- Core Web Vitals (LCP, INP, CLS): These are specific, user-centric metrics designed to quantify key aspects of page experience:

- Largest Contentful Paint (LCP): Measures loading performance – how quickly the largest content element becomes visible. Aim for under 2.5 seconds.1

- Interaction to Next Paint (INP): Measures responsiveness – how quickly the page responds to user interactions (clicks, taps, key presses). Replaced First Input Delay (FID) in March 2024.153 Aim for under 200 milliseconds.153

- Cumulative Layout Shift (CLS): Measures visual stability – how much the layout shifts unexpectedly during loading. Aim for a score below 0.1.1

Core Web Vitals are confirmed ranking signals used within the Page Experience evaluation.6 They are measured using both real-user data (field data from the Chrome User Experience Report, CrUX) and controlled-environment testing (lab data from tools like Lighthouse).66

- Site Security (HTTPS): Using HTTPS (via an SSL/TLS certificate) encrypts the connection between the user's browser and the website, enhancing security and user trust.27 Google confirmed HTTPS as a lightweight ranking signal in 2014 and encourages its adoption.27 It's a fundamental component of page experience and E-E-A-T.26

- Intrusive Interstitials and Page Layout: Google penalizes pages that display intrusive popups or interstitials that cover the main content and hinder accessibility, particularly on mobile devices.1 This penalty was introduced in 2017.64 Exceptions exist for legally required notices (cookies, age gates), login dialogs, and reasonably sized, easily dismissible banners.64 Similarly, pages overloaded with ads, especially above the fold, can negatively impact UX and rankings.1
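The Core Web Vitals targets listed above lend themselves to a simple classification helper. The "good" limits (2.5 seconds, 200 milliseconds, 0.1) come from the text; the "poor" cut-offs (4.0 seconds, 500 milliseconds, 0.25) follow Google's published guidance rather than this article. The function and dictionary names are hypothetical, and values exactly at a threshold are treated as falling in the better band.

```python
# (good_limit, poor_limit) per metric; values above poor_limit rate as "poor".
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),
    "inp_ms": (200, 500),
    "cls": (0.1, 0.25),
}


def rate(metric, value):
    """Classifies one measurement as good, needs improvement, or poor."""
    good_limit, poor_limit = THRESHOLDS[metric]
    if value <= good_limit:
        return "good"
    if value <= poor_limit:
        return "needs improvement"
    return "poor"


def assess(measurements):
    """Rates every metric in a {metric: value} dictionary."""
    return {metric: rate(metric, value) for metric, value in measurements.items()}


# Hypothetical measurements for one page.
report = assess({"lcp_seconds": 2.1, "inp_ms": 350, "cls": 0.31})
for metric, rating in report.items():
    print(f"{metric}: {rating}")
```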

Collectively, these user experience factors demonstrate Google's commitment to ranking pages that are not just relevant but also accessible, fast, secure, and user-friendly. A poor experience can significantly detract from even the highest quality content, hindering its ability to rank effectively.

Technical Foundations: Ensuring Accessibility

Technical SEO refers to optimizing the underlying infrastructure of a website to ensure search engines can effectively find, crawl, understand, and index its content.70 It forms the essential foundation for all other SEO efforts.

- Site Architecture and Navigation: A logical and intuitive site structure is crucial for both users and search engine crawlers.5 Good architecture ensures that crawlers can easily discover all important pages and understand the relationships between them, facilitating efficient crawling and indexing.72 Best practices include using clear primary navigation menus, organizing content into logical categories and subcategories (directories), implementing breadcrumb navigation for context, and ensuring strong internal linking between related pages.37 A "flat" architecture, where important content is reachable within a few clicks from the homepage, is generally preferred.72 The URL structure should also be logical, descriptive, and concise, using keywords appropriately and hyphens for word separation.11

- Crawlability and Indexability (Robots.txt, Sitemaps, Meta Tags): Webmasters need to provide clear instructions to search engines about how to handle their site's content.

- robots.txt: This file tells crawlers which parts of a site they should not crawl.57 It's useful for blocking unimportant resources or duplicate content areas but should not be used to prevent indexing (that's the role of noindex): a blocked URL can still be indexed if other pages link to it, and Googlebot cannot see a noindex directive on a page it is not allowed to crawl.60 Misconfiguration can accidentally block important content.57

- XML Sitemaps: These files provide a list of important URLs for Google to crawl and index, helping with discovery and prioritization, especially for large sites or new content.21

- Indexability Controls: The noindex directive (implemented via meta tag or X-Robots-Tag HTTP header) explicitly tells Google not to include a page in its index.58 Canonical tags (rel="canonical") are vital for managing duplicate content, telling Google which version of a page is preferred and should receive consolidated ranking signals.21

- Crawl Budget Management: For very large websites, understanding and managing crawl budget (the resources Google allocates to crawling the site) is important. Factors like site speed, server health, and frequency of updates influence this.53

- Structured Data and Schema Markup: Implementing structured data using vocabularies like Schema.org provides explicit information about the content's meaning and context to search engines.68 This helps Google understand entities, relationships, and specific details (like recipe ingredients, event dates, product prices, or FAQ answers).179 The primary benefit is eligibility for rich results (enhanced visual displays in SERPs like star ratings, images, Q&A dropdowns), which can significantly improve visibility and click-through rates.177 While not a strong direct ranking factor itself 37, structured data is crucial for appearing in these enhanced features and aids Google's broader understanding of content, potentially influencing Knowledge Graph entries and AI-driven features.37 Implementation typically involves adding JSON-LD code (preferred by Google) to the page's HTML, validating it with tools like the Rich Results Test, and adhering to Google's guidelines.178
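As an illustration of the JSON-LD approach described above, the sketch below builds Schema.org `FAQPage` markup and wraps it in a script tag ready to embed in a page. The helper names and the sample question are hypothetical. Valid markup only makes a page eligible for rich results; it does not guarantee them, and the output should still be validated with a tool such as the Rich Results Test.

```python
import json


def faq_jsonld(pairs):
    """Builds a Schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def script_tag(data):
    """Serializes the data as a JSON-LD script element."""
    payload = json.dumps(data, indent=2)
    # Escape "</" so text inside the data cannot close the script element early.
    payload = payload.replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


data = faq_jsonld([
    ("How should words in a URL be separated?", "Use <b>hyphens</b> between words."),
])
tag = script_tag(data)
print(tag)
```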

Without a solid technical foundation ensuring crawlability, indexability, usability, and understandability, even the most exceptional content and authoritative backlinks may fail to achieve their ranking potential. Technical SEO is not merely about fixing errors; it's about creating an environment where search engines can efficiently access and interpret a website's value.

4. Understanding the Searcher: Intent and Context

Beyond analyzing the content and structure of webpages, Google's algorithms invest heavily in understanding the user – specifically, their intent and the context surrounding their search.

Decoding Searcher Intent: Informational, Navigational, Transactional, Commercial

Search intent refers to the fundamental purpose or goal behind a user's query.5 Recognizing this intent is paramount because Google's primary objective is to provide results that directly satisfy the user's need.6 Mismatched intent leads to poor user experience and consequently, lower rankings.13 Search intent is commonly categorized into four main types:

- Informational ("Know"): The user is seeking information, answers, or wants to learn about a topic. These queries often use question words ("what," "how," "why") or general terms.75 Examples include "what is climate change?" or "how to tie a tie." Google typically serves blog posts, articles, guides, definitions, or featured snippets for these queries.184 This intent often represents the top of the marketing funnel.184

- Navigational ("Go"): The user wants to reach a specific website, brand, or page they already have in mind.75 Examples include "YouTube," "Amazon login," or "Moz blog." For well-known entities, the target site should naturally rank first.184

- Transactional ("Do"): The user intends to perform an action, most commonly making a purchase, but also potentially downloading something, signing up, or finding a physical location to visit.75 These queries often include words like "buy," "order," "download," "coupon," "near me," or specific product names.138 This represents the bottom of the funnel, indicating high purchase intent.184 Google typically serves product pages, category pages, service pages, or local map results.138 Shopping ads are frequently triggered.184

- Commercial Investigation ("Investigate"): The user is in the research phase before a potential transaction. They are comparing products, services, or brands, looking for reviews, or seeking the "best" option.75 Examples include "best smartphones 2025," "Salesforce vs HubSpot," or "RankBrain reviews." This intent falls in the middle of the funnel.185 Google often ranks comparison articles, review sites, detailed guides, and listicles.138

Google's algorithms use various signals, including the query wording (keyword modifiers) and the types of results users typically click on for similar queries (learned through machine learning systems like RankBrain), to determine the dominant intent.86 Some queries can have mixed intent, where Google might show a variety of result types to cater to different possibilities.184 For SEO professionals and content creators, identifying the primary intent for target keywords—often by analyzing the current SERP 186—and creating content that explicitly matches that intent is a non-negotiable aspect of modern optimization.138
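The keyword-modifier signal can be approximated with a small rule-based classifier. This is a deliberately naive sketch: the modifier lists are hypothetical and loosely based on the examples above, the brand list must be supplied by the caller, and Google's systems rely on learned behavior rather than fixed word lists.

```python
import re

# Hypothetical modifier lists, checked in this order.
MODIFIERS = [
    ("transactional", {"buy", "order", "download", "coupon"}),
    ("commercial", {"best", "vs", "review", "reviews", "compare"}),
    ("informational", {"what", "how", "why", "guide"}),
]


def classify_intent(query, known_brands=()):
    """Guesses the dominant intent of a query from its wording."""
    text = query.lower()
    tokens = set(re.findall(r"[a-z0-9]+", text))
    if "near me" in text:
        return "transactional"
    for intent, words in MODIFIERS:
        if tokens & words:
            return intent
    if tokens & {brand.lower() for brand in known_brands}:
        return "navigational"
    # With no other evidence, assume the user wants information.
    return "informational"


brands = ("YouTube", "Amazon")
for query in [
    "how to tie a tie",
    "Amazon login",
    "buy running shoes",
    "Salesforce vs HubSpot",
    "restaurants near me",
]:
    print(f"{query!r}: {classify_intent(query, brands)}")
```

A rule list like this breaks down quickly on mixed-intent queries, which is one reason checking the live SERP remains the more reliable way to confirm intent.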

The Role of Context: Personalizing the SERPs

Beyond understanding the general intent of a query, Google further refines results by considering the specific context of the user at the time of the search.20 This personalization aims to make results more immediately relevant and useful.194 Key contextual factors include:

- Location: A user's physical location is a powerful contextual signal, especially for queries with explicit or implicit local intent.14 Searching for "coffee shop" will yield different results in London versus Tokyo. Proximity is a dominant factor in local SEO rankings.1 Even non-local queries can be influenced; "football" yields different sports results based on location.20

- Search History: Google may use a user's past search queries and interactions with results to tailor current SERPs.20 If a user frequently searches for information related to a specific entity (e.g., "Apple" the company), future ambiguous searches for "apple" are more likely to show results related to the company.196 Pages visited multiple times previously might be ranked higher for that user.20 Broader Web & App Activity across Google services can also inform personalization.22

- Device Type: Results can be adapted based on whether the search is performed on a desktop, mobile, or tablet.19 Mobile-friendliness is a stronger factor for mobile rankings.149

- Query Phrasing and Language: The specific words used, the sentence structure, and the language of the query provide crucial context that NLP models like BERT analyze to understand subtle differences in meaning.5 The language setting also determines the primary language of the results shown.20

- Time and Current Events: For queries related to breaking news, ongoing events, or time-sensitive information (like sports scores or stock prices), algorithms prioritize the most recent and up-to-date content.20

- User Settings: Explicit user preferences, such as language settings, SafeSearch filters, or followed topics in Google Discover, are used to customize the experience.20

Google emphasizes that while personalization aims to match user interests, it is not designed to infer sensitive characteristics like race, religion, or political affiliation.20 Users are provided with controls through their Google Account (Activity Controls, My Ad Center, Privacy Checkup, Incognito Mode) to manage their data, adjust personalization settings, or turn off personalization features.14

The implication for SEO is that rankings are not absolute but relative to the context of the searcher. A website might rank differently for the same keyword depending on the user's location, device, or past behavior. This underscores the importance of understanding the target audience's typical context when developing content and SEO strategies.

5. Milestones in Evolution: Significant Algorithm Updates

Google's search algorithm is in a state of perpetual refinement, with thousands of minor adjustments made annually.8 However, several major, often named, updates have marked significant shifts in how Google assesses websites and ranks content. While many systems introduced by these updates (like Panda and Penguin) are now integrated into the core algorithm 2, understanding their original purpose provides insight into Google's long-term priorities.

- Panda (Launched Feb 2011): Targeting Content Quality

The Panda update was Google's first major offensive against low-quality content.48 It specifically targeted "content farms" churning out large volumes of superficial articles, sites with "thin" or shallow content, pages with excessive advertising relative to content, and sites relying heavily on scraped or duplicate material.48 Its purpose was to algorithmically identify and reduce the rankings of such low-value sites, while rewarding those producing original, high-quality, and useful content.49 Panda introduced a site-wide quality assessment, meaning poor content in one section could negatively impact the entire domain's visibility.49 Its initial rollout affected nearly 12% of English search queries 48, causing significant disruption and forcing a major shift in the SEO industry towards prioritizing content quality and user value, aligning with E-E-A-T principles.49 Panda eventually became part of Google's core ranking systems.2

- Penguin (Launched April 2012): Combating Link Spam

Following Panda's focus on content, the Penguin update targeted manipulative link-building practices used to artificially inflate PageRank and search rankings.50 It aimed to devalue or penalize sites engaging in link schemes, such as buying or selling links that pass PageRank, participating in excessive link exchanges, using private blog networks (PBNs), obtaining links from low-quality directories or bookmark sites, and employing over-optimized or spammy anchor text.50 Penguin's goal was to ensure that rankings were influenced by natural, high-quality, editorially earned links rather than artificial ones.51 The initial impact affected over 3% of English queries.50 Penguin underwent several iterations before Penguin 4.0 (Sept 2016) integrated it into the core algorithm, making its operation real-time and more granular.2 This real-time nature meant sites could recover faster after cleaning up bad links (often using the Disavow tool 51), and the algorithm shifted towards devaluing spammy links rather than solely penalizing the entire site.142 Penguin fundamentally changed link building, emphasizing quality and relevance over quantity.51

- Hummingbird (Launched Aug 2013): Embracing Semantic Search

Hummingbird represented a fundamental overhaul of Google's core search algorithm, described as the biggest change since 2001.88 Its primary purpose was to move Google beyond keyword matching towards a deeper understanding of the meaning and intent behind search queries, particularly longer, more conversational ones.6 It focused on "semantic search," analyzing the relationships between concepts (entities) rather than just individual words, leveraging technologies like the Knowledge Graph.44 Hummingbird aimed to parse the entire query to understand context and nuance, allowing it to handle natural language more effectively.88 Affecting an estimated 90% of searches globally 44, Hummingbird significantly improved Google's ability to provide relevant answers to complex questions and laid the groundwork for advancements in voice search and AI-driven query interpretation like RankBrain.44 It solidified the shift in SEO towards understanding user intent and creating topically relevant content.88

- RankBrain (Announced Oct 2015): The Dawn of Machine Learning in Ranking

RankBrain was Google's first major foray into using machine learning directly within its core ranking algorithm.39 As part of the Hummingbird system, its specific function was to help Google interpret ambiguous search queries, especially the 15% of daily queries that Google had never encountered before.39 RankBrain uses mathematical vectors to represent words and concepts, allowing it to identify patterns and relationships between seemingly unrelated queries and infer the user's likely intent even without exact keyword matches.39 It considers context like location and personalization and learns over time by observing user interactions with search results (e.g., click-through rates, dwell time) to gauge satisfaction and refine its interpretations.5 Shortly after its launch, Google stated RankBrain had become the third most important ranking signal 39, underscoring the pivotal role of AI in understanding complex user needs and further pushing SEO towards intent optimization.41

- BERT (Rolled out Oct 2019): Understanding Conversational Nuance

BERT (Bidirectional Encoder Representations from Transformers) marked another significant leap in Google's Natural Language Processing (NLP) capabilities.45 Unlike previous models that processed words sequentially, BERT's Transformer architecture allows it to process the entire sequence of words in a query at once, considering the full context of a word by looking at the words that come before and after it (bidirectional).45 This enables a much deeper understanding of nuance, particularly the role of prepositions (like "to" or "for") and word order in determining the meaning of longer, conversational queries.1 BERT was applied to both search query understanding and the generation of featured snippets.207 Impacting around 10% of English queries initially 45, BERT reinforced the need for clear, naturally written content that directly addresses user questions, as Google became better equipped to understand precisely what users were asking.45

- Helpful Content Update (System) (Launched Aug 2022): Prioritizing People-First Content

This update introduced a new system specifically designed to elevate content created for human audiences and demote content created primarily to attract search engine clicks.6 It targeted content perceived as unhelpful, lacking depth, providing little original value, or failing to deliver a satisfying user experience.28 A key aspect was the introduction of a site-wide signal: if a site hosted a significant amount of unhelpful content, its overall visibility could decrease, affecting even its high-quality pages.2 The update strongly emphasized signals aligned with E-E-A-T, particularly first-hand experience and demonstrated expertise.28 It prompted website owners to conduct content audits and remove or substantially improve low-value pages.98 In March 2024, the Helpful Content System was integrated into Google's core ranking systems, meaning its principles are now continuously applied.2

- SpamBrain (Ongoing, Enhanced for Link Spam Dec 2022): AI-Powered Spam Detection

While not a single update event, SpamBrain represents Google's primary AI system for fighting spam.2 It continuously learns and adapts to detect various spam tactics, including cloaking, hacked content, auto-generated content, and scraped content.146 In December 2022, Google announced a significant enhancement where SpamBrain was specifically leveraged to detect link spam, identifying both sites buying links and sites whose primary purpose is to sell or pass links.143 This AI-driven approach allows Google to neutralize the impact of unnatural links at scale and in real-time, reinforcing the principles established by the earlier Penguin updates.143

- Core Updates (Ongoing, Multiple Times Per Year): Broad System Refinements

Core updates are significant, broad adjustments to Google's overall ranking algorithm, typically announced by Google due to their potential for widespread impact.6 Unlike targeted updates (like Panda or Penguin initially), core updates don't focus on fixing a single issue but rather aim to improve how Google's systems assess content quality and relevance overall, ensuring they deliver on the mission to provide helpful and reliable results.78 Google often describes them as a reassessment of the web, akin to updating a list of top recommendations.78 These updates can cause noticeable ranking fluctuations for many sites, even those adhering to guidelines, simply because the relative assessment of content changes – some pages may be deemed more relevant or helpful under the refined criteria.78 Recovery from a negative impact typically involves focusing on improving overall content quality, helpfulness, and E-E-A-T, rather than fixing specific technical errors, and improvements may only be reflected after subsequent core updates.10 Recent core updates (e.g., March 2024, August 2024) have explicitly continued the push towards reducing low-quality, unoriginal, "search engine-first" content and better rewarding helpful, people-first content, often incorporating learnings from systems like the Helpful Content Update.10

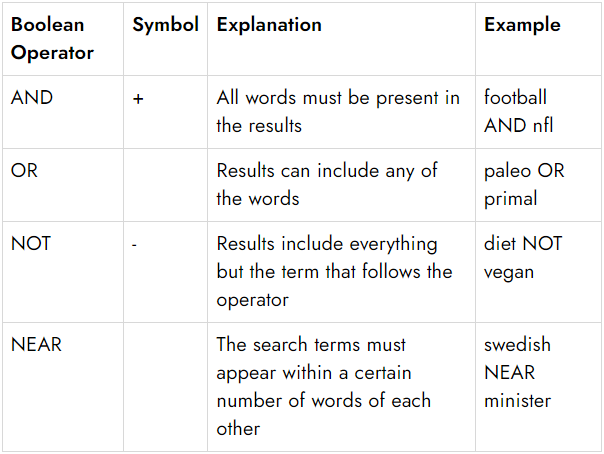

Table 1: Major Google Algorithm Updates

| Update Name | Year Introduced | Primary Purpose | Key Impact on Ranking/SEO |
| --- | --- | --- | --- |
| Panda | 2011 | Target low-quality/thin content, content farms, high ad-to-content ratio | Increased importance of content quality, originality, depth; penalized sites with poor content; now part of core algorithm |
| Penguin | 2012 | Combat manipulative link building (link schemes, paid links, anchor text spam) | Penalized/devalued spammy links; emphasized earning high-quality, relevant links; now real-time & core algorithm part |
| Hummingbird | 2013 | Improve understanding of conversational query meaning & intent (semantic search) | Shifted focus from keywords to meaning; improved handling of long-tail/voice queries; foundational for AI in search |
| RankBrain | 2015 | Use machine learning to interpret novel/ambiguous queries & user intent | Became a top ranking signal; improved relevance for complex queries; incorporated user signals; emphasized intent match |
| BERT | 2019 | Use NLP (bidirectional transformers) to understand query context & nuance | Significantly improved natural language understanding, especially prepositions/word order; impacted 10% of queries |
| Helpful Content | 2022 | Reward "people-first" content, demote "search engine-first" content | Introduced site-wide signal; emphasized E-E-A-T (esp. Experience); now integrated into core ranking systems |
| SpamBrain | Ongoing (Enhanced 2022 for links) | AI-based system to detect & neutralize various spam types (incl. link spam) | Improves spam detection at scale; neutralizes unnatural links; constantly updated |
| Core Updates | Ongoing | Broad improvements to overall ranking systems; reassess content quality/relevance | Can cause significant ranking shifts; reflect evolving understanding of helpfulness & E-E-A-T; require holistic quality improvements |

These updates illustrate a clear trajectory: from tackling basic manipulation to developing sophisticated AI capable of understanding language and intent, all in service of providing better, more reliable search results.

6. The Rise of AI: Machine Learning's Deepening Role

Artificial intelligence (AI) and machine learning (ML) have transitioned from being experimental components to becoming integral, driving forces behind Google's search algorithms.1 These technologies permeate various aspects of the search process, from understanding user queries to evaluating content and detecting spam.

- Query Understanding (RankBrain, Neural Matching, BERT, MUM): AI is fundamental to interpreting the complex and often ambiguous nature of human language in search queries.

- RankBrain: As previously discussed, this ML system excels at handling novel or ambiguous queries by converting language into mathematical vectors and identifying patterns to infer intent, even without prior exposure to the exact phrasing.39

- Neural Matching: This AI system focuses on understanding the underlying concepts within a query and matching them to concepts discussed on webpages, going beyond simple keyword or synonym matching.1 It leverages neural networks to grasp semantic relationships, enabling Google to surface relevant pages even if they don't share exact keywords with the query.94

- BERT: The BERT model uses its bidirectional Transformer architecture to understand the crucial role of context, word order, and prepositions in determining the precise meaning of a query.45 This allows for more accurate results for natural language and conversational searches.

- MUM (Multitask Unified Model): Representing a further evolution, MUM is designed to be multitask, multimodal, and multilingual.91 Built on the powerful T5 Transformer architecture 241, MUM can understand information across text, images (and potentially video/audio in the future) and transfer knowledge across 75+ languages.241 This allows it to tackle complex queries that require synthesizing information from diverse sources and formats.241

- Content Evaluation and Semantic Analysis: AI, particularly through NLP, plays a vital role in how Google assesses the content of webpages.

- Deep Understanding: Models like BERT and MUM utilize NLP techniques (such as entity recognition, sentiment analysis, text categorization, and understanding word dependencies) to analyze content far beyond keywords.5 They aim to understand the topics covered, the relationships between entities mentioned, the overall sentiment, and the likely purpose or function of the content.91

- Quality Signals: AI systems learn to identify patterns and signals within content that correlate with human judgments of quality, helpfulness, and E-E-A-T.96 This might involve assessing comprehensiveness, originality, clarity, and evidence of expertise.

- Semantic Relevance: AI enables ranking based on semantic similarity, using techniques like vector embeddings to represent the meaning of queries and documents mathematically and finding the closest matches, even if keywords differ.93

- Pattern Recognition and Signal Processing: At its heart, machine learning involves identifying patterns in data to make predictions.

- Learning from Data: Google's AI models are trained on massive datasets comprising web content, search queries, and user interaction data.40 They learn complex correlations between query features, content features, link features, and user satisfaction signals.

- User Behavior Analysis: Systems like RankBrain learn from aggregated and anonymized user interaction signals (like click-through rate, dwell time, bounce rate, pogo-sticking) to understand which results best satisfy users for particular types of queries.5 This feedback loop helps refine the algorithms.

- Continuous Adaptation: AI models are not static; they are continually updated and retrained based on new data, experimental results, and feedback from human quality raters, allowing the algorithm to adapt to the evolving web and user behavior.7

- AI in Spam Detection (SpamBrain): As highlighted previously, SpamBrain is Google's dedicated AI system for combating spam.2 Its machine learning capabilities allow it to identify complex spam patterns, including sophisticated link schemes, hacked content, and auto-generated abuse, often more effectively and at a greater scale than rule-based systems.144 It learns from reported spam and adapts to new manipulative tactics.2
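The vector-based matching described above for RankBrain and semantic relevance can be illustrated with cosine similarity, a standard way to compare embedding vectors. The sketch below is a toy example, not Google's implementation. The three-dimensional vectors, the document IDs, and the function names are all hypothetical; real embeddings come from trained models and have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # A zero vector has no direction, so treat it as unrelated.
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_vec, doc_vecs):
    """Return (doc_id, score) pairs sorted from most to least similar."""
    scored = [
        (doc_id, cosine_similarity(query_vec, vec))
        for doc_id, vec in doc_vecs.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical hand-made embeddings; a real system would obtain these from a model.
QUERY = [0.9, 0.1, 0.0]  # e.g. "fix my notebook computer"
DOCS = {
    "laptop-repair-guide": [0.8, 0.2, 0.1],
    "banana-bread-recipe": [0.0, 0.1, 0.9],
}

if __name__ == "__main__":
    for doc_id, score in rank_by_similarity(QUERY, DOCS):
        print(f"{doc_id}: {score:.3f}")
```

The point of the example is that the query and the best-matching document need not share any keywords: closeness in the vector space stands in for closeness in meaning.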

The increasing integration of AI means that Google's understanding of language, intent, and content quality is becoming ever more sophisticated. This necessitates a shift in SEO towards creating genuinely helpful, high-quality content that satisfies user intent deeply, rather than relying on manipulating simpler signals.

7. The Ranking Symphony: How Diverse Elements Interact

Determining the final ranking order for a given search query is not a simple calculation based on a few dominant factors. Instead, it's a complex interplay where hundreds of signals across various categories—content, links, user experience, technical factors, intent understanding, and context—are dynamically evaluated and weighted by Google's sophisticated algorithms and AI systems.4

- Dynamic Weighting: A crucial aspect is that the importance or "weight" assigned to each ranking signal is not static. It varies significantly depending on the nature of the query, the user's intent, and their context.20

- Query Nature: For informational queries seeking factual answers ("capital of Australia"), signals related to authority and trustworthiness (E-E-A-T) might be heavily weighted.38 For navigational queries ("Twitter login"), signals related to brand recognition and the official site URL are paramount. For transactional queries ("buy running shoes"), signals related to product availability, site security (HTTPS), and potentially commercial reviews might be more influential.184 For news-related queries ("latest election results"), freshness is critical.2 For local queries ("pizza delivery"), proximity is a dominant factor.20

- YMYL Adjustment: Google explicitly states that for queries related to "Your Money or Your Life" (YMYL) topics, its algorithms give more weight to factors indicating expertise, authoritativeness, and trustworthiness (E-E-A-T).38

- AI Interpretation: Systems like RankBrain and BERT analyze the query to understand its underlying intent and nuances, which then influences which signals are prioritized in the ranking process.39

- Interdependence of Signals: Ranking factors rarely operate in isolation. They often influence or moderate each other.

- Content & Links: High-quality content naturally attracts high-quality backlinks, reinforcing authority.85 Conversely, strong backlinks pointing to low-quality content may have their value diminished by content quality signals or spam detection systems.49

- Content & UX: Excellent content can be undermined by poor page experience (slow speed, intrusive ads, not mobile-friendly), preventing users from accessing it easily and thus reducing its perceived helpfulness.27 Conversely, a technically perfect site with poor content won't satisfy user intent.79

- Technical SEO as Foundation: As established, technical factors like crawlability and indexability are prerequisites. If Google can't find or process a page, its content quality or links become irrelevant for ranking.21

- Intent & Context Modulate Everything: The interpretation of the user's intent and context acts as a lens through which all other signals are viewed and weighted.20 A page highly relevant to one interpretation of a query might be irrelevant to another interpretation triggered by a different user context.

- AI Systems as Conductors: AI models like RankBrain, Neural Matching, BERT, and MUM act like conductors in this symphony. They don't just represent individual signals but help interpret the query, understand the content semantically, learn from user interactions, and potentially adjust the weighting of various classical signals (like keywords, links, freshness) based on this deeper understanding.5 SpamBrain acts as a gatekeeper, removing or neutralizing signals deemed manipulative.143

- Holistic Assessment: Ultimately, Google aims for a holistic assessment. While individual factors are important, the goal is to reward pages and sites that consistently provide a high-quality, relevant, trustworthy, and satisfying experience overall.26 A site might not excel in every single metric but can still rank well if it strongly satisfies the user's core need for a particular query better than competitors.27 Google advises against "hyper-focusing" on individual metrics and encourages a holistic view of site quality and user experience.150
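The notion of dynamic weighting can be sketched as a weighted sum whose weights depend on the query type. This is a deliberately simplified illustration: Google does not publish its signals or weights, and its systems are not a fixed linear formula. The signal names, the weight values, and the function `score_page` are all hypothetical, chosen only to show how the same two pages can swap places when the query type changes.

```python
# Hypothetical weights per query type; the numbers are invented for illustration.
WEIGHTS = {
    "news": {"relevance": 0.4, "authority": 0.2, "freshness": 0.4, "proximity": 0.0},
    "local": {"relevance": 0.4, "authority": 0.1, "freshness": 0.0, "proximity": 0.5},
    "informational": {"relevance": 0.5, "authority": 0.4, "freshness": 0.1, "proximity": 0.0},
}


def score_page(signals, query_type):
    """Weighted sum of a page's signals (each in 0..1) for the given query type."""
    weights = WEIGHTS[query_type]
    # Signals missing from the page default to 0.0.
    return sum(weight * signals.get(name, 0.0) for name, weight in weights.items())


# Two hypothetical pages that are equally relevant but differ in other signals.
FRESH_PAGE = {"relevance": 0.7, "authority": 0.3, "freshness": 0.9}
AUTHORITATIVE_PAGE = {"relevance": 0.7, "authority": 0.9, "freshness": 0.2}

if __name__ == "__main__":
    for query_type in ("news", "informational"):
        fresh = score_page(FRESH_PAGE, query_type)
        authoritative = score_page(AUTHORITATIVE_PAGE, query_type)
        print(f"{query_type}: fresh={fresh:.2f}, authoritative={authoritative:.2f}")
```

Under these invented weights, the fresher page scores higher for the news query and the more authoritative page scores higher for the informational query, which mirrors the query-dependent behavior described in the list above.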

This complex, dynamic interaction means there is no simple checklist or fixed formula for achieving top rankings. Success requires a comprehensive strategy that addresses content quality, technical soundness, link authority, and user experience, all while staying attuned to the likely intent and context of the target audience.

8. The Horizon: Future Directions and Ongoing Discussions

The landscape of Google Search is perpetually evolving, driven by advancements in technology, shifts in user behavior, and Google's continuous efforts to refine its algorithms. Understanding current discussions and potential future trajectories is crucial for anyone relying on search visibility.

- The Ascendancy of AI and Generative Search:

- AI Overviews (Formerly SGE): The most significant recent development is the integration of generative AI directly into the SERP through AI Overviews.42 These AI-generated summaries aim to provide direct answers to queries, synthesizing information from multiple web sources.47 During testing, AI Overviews appeared on a high percentage of queries (up to 84%) 255, but their presence has been volatile since rollout: it reportedly dropped below 15% of queries overall at one point, while spiking in certain categories such as problem-solving queries and during core updates.46

- Impact on Traffic and CTR: A major concern within the SEO industry and among publishers is the potential for AI Overviews to reduce click-through rates (CTR) to traditional organic listings, as users may get their answers directly on the SERP (zero-click searches).183 Recent studies suggest significant CTR drops for top organic positions when AI Overviews are present, particularly for non-branded, informational queries.261 While Google claims AI Overviews can lead to higher-quality clicks and increased search usage 255, many publishers remain skeptical and report negligible or negative traffic impacts so far.263

- Optimizing for AI Overviews ("GEO"): Strategies are emerging around "Generative Engine Optimization" (GEO).116 This involves structuring content clearly (Q&A format, step-by-step instructions), building topical authority, ensuring E-E-A-T signals are strong, and potentially using schema markup.47 Interestingly, recent data suggests a high overlap (up to 99%) between sources cited in AI Overviews and pages ranking in the top 10 organic results, implying that strong traditional SEO foundations are key.266 However, the overlap dropped slightly after a recent core update, suggesting pages outside the top 10 might have increased chances.262

- Future AI Capabilities (MUM): Technologies like MUM promise even more sophisticated understanding across languages and modalities (text, image, video, audio), potentially enabling Google to answer highly complex, multi-step queries by synthesizing information from diverse global sources.140 This further points towards a future where Google acts more as an answer engine than a list of links.

- Evolving User Behavior: User search habits are changing, impacting how algorithms are tuned.

- Mobile, Voice, and Visual Search: Mobile search dominance continues 67, and voice search queries (often longer and conversational) are increasing with the adoption of smart speakers and assistants.44 Visual search (using images to search via tools like Google Lens) is also growing rapidly.165 Algorithms must adapt to understand and rank content effectively for these non-textual and conversational query types.68

- Search Diversification: Users are increasingly turning to platforms beyond traditional search engines (like TikTok, YouTube, Reddit, Amazon) for discovery and information, especially younger demographics.117 This necessitates a broader "search everywhere optimization" approach for brands.258

- Zero-Click Searches: Features like Featured Snippets, Knowledge Panels, and now AI Overviews mean users often find answers directly on the SERP without clicking through to a website.165 This trend challenges traditional traffic-based SEO metrics and emphasizes the importance of brand visibility and appearing within these SERP features.117

- Continued Emphasis on Content Quality and E-E-A-T: Despite technological shifts, Google consistently reiterates the importance of high-quality, reliable, people-first content demonstrating Experience, Expertise, Authoritativeness, and Trustworthiness.6 As AI makes content generation easier, demonstrating genuine human experience and verifiable expertise becomes an even stronger differentiator.12 Core updates continue to refine the assessment of helpfulness and penalize low-value, unoriginal content.160

- Privacy Considerations: With increasing regulatory scrutiny and the phase-out of third-party cookies, Google is navigating a path towards more privacy-preserving technologies (like the Privacy Sandbox) while still enabling personalization and advertising.22 SEO strategies may need to adapt to rely more on first-party data and contextual relevance.164

- Criticisms and Challenges: Google's algorithm is not without criticism. Concerns persist regarding:

- Bias: Algorithms can inadvertently perpetuate or amplify societal biases present in their training data or design choices, potentially leading to discriminatory outcomes in areas like news visibility, job listings, or product recommendations.270 Studies have shown biases favoring popular outlets or certain political leanings in news results.270

- Transparency: The complexity and proprietary nature of the algorithms lead to a lack of transparency (the "black box" problem), making it difficult for researchers, regulators, and even users to fully understand why certain results are shown or how decisions are made.271 This hinders accountability and the ability to identify or rectify biases.272

- Market Power: Google's dominance in search raises antitrust concerns about whether it manipulates results to favor its own products or disadvantage competitors (e.g., vertical search engines or information providers).275 The impact of features like AI Overviews on publisher traffic fuels these concerns.261

- Content Quality Decline: Some critics and studies argue that despite Google's efforts, the quality of search results has declined, with spammy or low-value content still ranking prominently, potentially exacerbated by AI-generated content.160

- Expert Predictions & SEO Adaptation: Industry experts predict a continued shift towards intent-based optimization, topical authority building, E-E-A-T demonstration, cross-platform visibility (OmniSEO), and adapting to AI-driven features like AI Overviews.115 The focus remains on providing genuine value to users, leveraging AI as a tool rather than a replacement for human expertise, and diversifying traffic sources beyond traditional Google organic search.117 Technical excellence, particularly around Core Web Vitals and mobile-friendliness, remains foundational.116

9. Conclusion: Navigating the Labyrinth

Google's search algorithm is a testament to the power and complexity of modern information retrieval systems. Driven by the mission to organize the world's information and make it useful, it has evolved from the elegant simplicity of PageRank into a dynamic ecosystem of interconnected algorithms and sophisticated AI models. Understanding this system requires appreciating its core processes—crawling, indexing, and ranking—and the diverse signals it evaluates: the relevance, quality, freshness, and E-E-A-T of content; the authority conveyed by backlinks; the seamlessness of the user experience; and the integrity of the technical foundation.

Crucially, the algorithm is not static. Its constant updates, from minor tweaks to major core revisions and the integration of transformative AI like RankBrain, BERT, and MUM, reflect an ongoing effort to better understand user intent and the nuances of human language. The weighting of ranking factors is fluid, adapting dynamically to the context of each unique search query, user location, history, and device. This contextual personalization aims to deliver the most relevant results for each individual user in their specific moment of need.

The rise of generative AI, exemplified by AI Overviews, presents both opportunities and significant challenges. While offering users potentially faster answers, it raises valid concerns for publishers regarding traffic and visibility, demanding new strategies focused on becoming cited sources and building authority beyond traditional blue links. Simultaneously, evolving user behaviors, including the growth of mobile, voice, and visual search, and the diversification of information discovery across platforms like social media, necessitate a broader, more adaptive approach to online visibility.

Despite the increasing role of AI and automation, the enduring principles for success in Google Search remain anchored in quality and user-centricity. Creating helpful, reliable, people-first content that demonstrates genuine experience, expertise, authoritativeness, and trustworthiness (E-E-A-T) is paramount. This must be supported by a technically sound, fast, secure, and mobile-friendly website that offers a positive user experience. While the specific tactics may evolve with each algorithm update, a fundamental commitment to providing value and satisfying user intent remains the most resilient strategy for navigating the complex and ever-changing labyrinth of Google Search. Ongoing vigilance, adaptation, and a focus on the end-user are essential for sustained visibility in this dynamic digital ecosystem.

Works cited

- Your Guide to Google Ranking Systems | Paul Teitelman SEO ..., accessed on April 23, 2025, https://www.paulteitelman.com/your-guide-to-google-ranking-systems/

- A Guide to Google Search Ranking Systems - Google for Developers, accessed on April 23, 2025, https://developers.google.com/search/docs/appearance/ranking-systems-guide

- Google Search Algorithm Leak: Internal Docs Reveal Secrets of Ranking, Clicks, and More, accessed on April 23, 2025, https://web.swipeinsight.app/posts/google-search-algorithm-leak-internal-docs-reveal-secrets-of-ranking-clicks-and-more-6537

- How Google Search Algorithm Works - 601MEDIA, accessed on April 23, 2025, https://www.601media.com/how-google-search-algorithm-works/

- All you need to know about Google search algorithm, accessed on April 23, 2025, https://www.goigo.agency/en/blog/all-you-need-to-know-about-google-search-algorithm

- Understanding the Core and Latest Google Algorithm Updates - SKILLFLOOR, accessed on April 23, 2025, https://skillfloor.com/blog/google-algorithm-updates

- Search Quality Rater Guidelines: An Overview - Google Services, accessed on April 23, 2025, https://services.google.com/fh/files/misc/hsw-sqrg.pdf

- Google Algorithm Updates & Changes: A Complete History - Search Engine Journal, accessed on April 23, 2025, https://www.searchenginejournal.com/google-algorithm-history/

- Google algorithm updates: The complete history - Search Engine Land, accessed on April 23, 2025, https://searchengineland.com/library/platforms/google/google-algorithm-updates

- Google Algorithm Updates: History & Latest Changes - ROI Revolution, accessed on April 23, 2025, https://roirevolution.com/blog/google-algorithm-updates-history-latest-changes/

- Google's Ranking Factors For SEO: A Complete Guide (2025) - ASL BPO, accessed on April 23, 2025, https://www.aslpreservationsolutions.com/google-ranking-factors-for-seo

- Google Latest Algorithm Updates Explained: How to Future-Proof Your Site - ProfileTree, accessed on April 23, 2025, https://profiletree.com/google-latest-algorithm-updates/

- The Impact of User Behavior on Google Search Metrics - seobase, accessed on April 23, 2025, https://seobase.com/how-user-behavior-affects-seo

- Our Approach - How Google Search Works, accessed on April 23, 2025, https://www.google.com/intl/en_us/search/howsearchworks/our-approach/

- What Is Google Search And How Does It Work, accessed on April 23, 2025, https://www.google.com/intl/en_us/search/howsearchworks/

- Google's (Alphabet's) Mission Statement & Vision Statement: An Analysis, accessed on April 23, 2025, https://panmore.com/google-vision-statement-mission-statement

- 35 Vision And Mission Statement Examples That Will Inspire Your Buyers - HubSpot Blog, accessed on April 23, 2025, https://blog.hubspot.com/marketing/inspiring-company-mission-statements

- Google Mission and Vision Statement Analysis - EdrawMind, accessed on April 23, 2025, https://www.edrawmind.com/article/google-mission-and-vision-statement-analysis.html

- Google Search Console Help, accessed on April 23, 2025, https://support.google.com/webmasters/thread/123822734/google-search-console-help?hl=en

- How Does Google Determine Ranking Results - Google Search, accessed on April 23, 2025, https://www.google.com/intl/en_us/search/howsearchworks/how-search-works/ranking-results/

- In-Depth Guide to How Google Search Works, accessed on April 23, 2025, https://developers.google.com/search/docs/fundamentals/how-search-works

- Ad Controls and Personalization Settings - Google Safety Center, accessed on April 23, 2025, https://safety.google/privacy/ads-and-data/

- Data Privacy Settings, Controls & Tools - Google Safety Center, accessed on April 23, 2025, https://safety.google/privacy/privacy-controls/

- Organizing Information - How Google Search Works, accessed on April 23, 2025, https://www.google.com/intl/en_us/search/howsearchworks/how-search-works/organizing-information/

- Google's Index Size Revealed: 400 Billion Docs (& Changing) - Zyppy SEO, accessed on April 23, 2025, https://zyppy.com/seo/google-index-size/

- Understanding Google Page Experience | Google Search Central | Documentation, accessed on April 23, 2025, https://developers.google.com/search/docs/appearance/page-experience

- Evaluating page experience for a better web | Google Search Central Blog, accessed on April 23, 2025, https://developers.google.com/search/blog/2020/05/evaluating-page-experience

- What creators should know about Google's August 2022 helpful content update, accessed on April 23, 2025, https://developers.google.com/search/blog/2022/08/helpful-content-update

- Timeline of Google Search - Wikipedia, accessed on April 23, 2025, https://en.wikipedia.org/wiki/Timeline_of_Google_Search

- The Algorithm That Made Google Google | Towards Data Science, accessed on April 23, 2025, https://towardsdatascience.com/the-algorithm-that-made-google-google-3bbc2cfa8815/

- PageRank - Wikipedia, accessed on April 23, 2025, https://en.wikipedia.org/wiki/PageRank

- The Google PageRank Algorithm, accessed on April 23, 2025, https://web.stanford.edu/class/cs54n/handouts/24-GooglePageRankAlgorithm.pdf

- ELI5:I have a fundamental understanding of what a mathematical algorithm is, but I wonder: Can anyone can explain how Google's PageRank algorithm works? - Reddit, accessed on April 23, 2025, https://www.reddit.com/r/explainlikeimfive/comments/4kusnv/eli5i_have_a_fundamental_understanding_of_what_a/

- Google's PageRank Algorithm: Explained and Tested, accessed on April 23, 2025, https://www.link-assistant.com/news/google-pagerank-algorithm.html

- Backlinks: A Beginner's Guide to Link-Building - Mangools, accessed on April 23, 2025, https://mangools.com/blog/backlinks/

- Beyond PageRank: Graduating to actionable metrics | Google Search Central Blog, accessed on April 23, 2025, https://developers.google.com/search/blog/2011/06/beyond-pagerank-graduating-to

- SEO Ranking Factors in 2025: Top, Verified, & More - SEO.com, accessed on April 23, 2025, https://www.seo.com/basics/how-search-engines-work/ranking-factors/

- Google Algorithms Detect & Adjust Rankings For YMYL Queries, accessed on April 23, 2025, https://www.seroundtable.com/google-algorithms-detect-adjust-ranking-ymyl-queries-27138.html

- FAQ: All about the Google RankBrain algorithm - Search Engine Land, accessed on April 23, 2025, https://searchengineland.com/faq-all-about-the-new-google-rankbrain-algorithm-234440

- Google RankBrain and its Relevance to SEO - Multi-Channel Digital Marketing, accessed on April 23, 2025, https://cjgdigitalmarketing.com/google-rankbrain-and-its-relevance-to-seo/

- Google RankBrain Algorithm: A Deep Dive into SEO Evolution - MAK Digital Design, accessed on April 23, 2025, https://makdigitaldesign.com/search-engine-optimization/google-rankbrain-seo-evolution/

- Google Search Algorithm Updates Timeline | Americaneagle.com, accessed on April 23, 2025, https://www.americaneagle.com/insights/blog/post/google-algorithm-updates-timeline

- Google Algorithm Update & Change History - 2015-2024 Timeline, accessed on April 23, 2025, https://www.goinflow.com/blog/google-algorithm-update-history-timeline/

- Google Hummingbird Update - Optimization & Recovery 2022 Guide - Click Intelligence, accessed on April 23, 2025, https://www.clickintelligence.co.uk/guides/google-update-history/hummingbird-algorithm-update-guide/

- Google BERT Update - What it Means - Search Engine Journal, accessed on April 23, 2025, https://www.searchenginejournal.com/google-bert-update/332161/

- Google AI Overviews Found In 74% Of Problem-Solving Queries - Search Engine Journal, accessed on April 23, 2025, https://www.searchenginejournal.com/google-ai-overviews-found-in-74-of-problem-solving-queries/538504/

- Google AI Overviews: Everything you need to know - Search Engine Land, accessed on April 23, 2025, https://searchengineland.com/google-ai-overviews-everything-you-need-to-know-449399

- Google Panda - Wikipedia, accessed on April 23, 2025, https://en.wikipedia.org/wiki/Google_Panda

- Google Panda algorithm update guide: Everything you need to know - Search Engine Land, accessed on April 23, 2025, https://searchengineland.com/google-panda-update-guide-381104

- What Is Google Penguin? How To Recover From Google Updates - Moz, accessed on April 23, 2025, https://moz.com/learn/seo/google-penguin

- Lookback: Google launched the Penguin algorithm update 11 years ago, accessed on April 23, 2025, https://searchengineland.com/google-penguin-algorithm-update-395910

- How Google's BERT Changed Natural Language Understanding | Brave River Solutions, accessed on April 23, 2025, https://www.braveriver.com/blog/how-googles-bert-changed-natural-language-understanding/

- How Search Engines Crawl, Index and Rank - Stan Ventures, accessed on April 23, 2025, https://www.stanventures.com/blog/crawling-indexing-ranking/

- How Google Search Works: Crawling - Indexing - Serving | Maze Solutions, accessed on April 23, 2025, https://www.wearemaze.com/fr/how-google-search-works-crawling-indexing-serving/

- Crawling December: The how and why of Googlebot crawling | Google Search Central Blog, accessed on April 23, 2025, https://developers.google.com/search/blog/2024/12/crawling-december-resources

- Google Explains Googlebot's Crawling Process in Latest Search Central Update, accessed on April 23, 2025, https://web.swipeinsight.app/posts/how-and-why-googlebot-crawls-in-december-13126

- Google Releases New 'How Search Works' Episode On Crawling, accessed on April 23, 2025, https://www.searchenginejournal.com/google-releases-new-how-search-works-episode-on-crawling/509552/

- What Is Googlebot | Google Search Central | Documentation, accessed on April 23, 2025, https://developers.google.com/search/docs/crawling-indexing/googlebot

- SEO Starter Guide: The Basics | Google Search Central | Documentation, accessed on April 23, 2025, https://developers.google.com/search/docs/fundamentals/seo-starter-guide

- Technical SEO Techniques and Strategies | Google Search Central | Documentation, accessed on April 23, 2025, https://developers.google.com/search/docs/fundamentals/get-started

- Top 7 Google Ranking Factors That Actually Impact Your SEO, accessed on April 23, 2025, https://outreachmonks.com/google-ranking-factors/

- Top 15 Most Important Google SEO Ranking Factors in 2024 - ResultFirst, accessed on April 23, 2025, https://www.resultfirst.com/blog/seo-basics/google-seo-ranking-factors/

- Why Is User Experience (UX) Design Important in SEO? - Wildcat Digital, accessed on April 23, 2025, https://wildcatdigital.co.uk/blog/why-is-user-experience-ux-design-important-in-seo/

- Google's Page Experience Update: What you Need to Know - IDX, accessed on April 23, 2025, https://www.idx.inc/blog/performance-marketing/googles-page-experience-update-what-you-need-know

- The Google December 2024 Core Update: What You Need to Know - SamBlogs, accessed on April 23, 2025, https://samblogs.com/google-december-2024-core-update/

- Google's Page Experience Update: How Will It Impact SEO? | NO BS Marketplace, accessed on April 23, 2025, https://nobsmarketplace.com/blog/googles-page-experience-update-how-will-it-impact-seo/

- 10 Steps to Recover from a Google SEO Algorithm Update - Nextiny Marketing & Sales Blog, accessed on April 23, 2025, https://blog.nextinymarketing.com/steps-to-recover-from-a-google-seo-algorithm-update

- SEO Basics: Understanding Google Algorithm Changes | ProfileTree, accessed on April 23, 2025, https://profiletree.com/understanding-google-algorithm-changes/

- Google Crawling and Indexing | Google Search Central | Documentation, accessed on April 23, 2025, https://developers.google.com/search/docs/crawling-indexing

- Technical SEO Checklist - The Roadmap to a Complete Technical SEO Audit - cognitiveSEO, accessed on April 23, 2025, https://cognitiveseo.com/blog/17963/technical-seo-checklist/

- Google details comprehensive web crawling process in new technical document, accessed on April 23, 2025, https://ppc.land/google-details-comprehensive-web-crawling-process-in-new-technical-document/

- Technical SEO Checklist: 10 Key Steps (+5 Expert Tips!), accessed on April 23, 2025, https://www.youngurbanproject.com/technical-seo-checklist/

- Key SEO Considerations for a New Website [with Checklist] - Darren McManus, accessed on April 23, 2025, https://www.seobydarren.com/blog/seo-considerations-new-website/

- Manage search indexes | BigQuery - Google Cloud, accessed on April 23, 2025, https://cloud.google.com/bigquery/docs/search-index

- How the Google Search Algorithm Works: A Zero-Fluff Guide - Semrush, accessed on April 23, 2025, https://www.semrush.com/blog/google-search-algorithm/

- A complete guide on how search engine algorithms work - Excite Media, accessed on April 23, 2025, https://www.excitemedia.com.au/how-search-engine-algorithms-work/

- Google search ranking systems: all active algorithms in Search - SEOZoom, accessed on April 23, 2025, https://www.seozoom.com/what-google-search-ranking-systems-are-and-how-they-work/

- Google Search's Core Updates | Google Search Central | What's new - Google for Developers, accessed on April 23, 2025, https://developers.google.com/search/updates/core-updates

- 7 Essential Google Ranking Factors for Attorney Websites in 2025 - Grow Law Firm, accessed on April 23, 2025, https://growlawfirm.com/seo-for-lawyers/google-ranking-factors

- Google's 200 Ranking Factors: The Complete List (2025) - Backlinko, accessed on April 23, 2025, https://backlinko.com/google-ranking-factors

- The 2025 Google Algorithm Ranking Factors - First Page Sage, accessed on April 23, 2025, https://firstpagesage.com/seo-blog/the-google-algorithm-ranking-factors/

- Search Engine Testing & Evaluation - Google, accessed on April 23, 2025, https://www.google.com/intl/en_us/search/howsearchworks/how-search-works/rigorous-testing/

- SEO Ranking Factors: The Complete List + Best Way to Use, accessed on April 23, 2025, https://techhelp.ca/seo-ranking-factors/

- Guide to Top 15 Google Ranking Factors To Optimize For Best SEO Results | General Data, accessed on April 23, 2025, https://www.gdata.in/blog/seo/guide-to-top-15-google-ranking-factors-to-optimize-for-best-seo-results

- Google's Known Ranking Factors: What to Know - HubSpot Blog, accessed on April 23, 2025, https://blog.hubspot.com/marketing/google-ranking-algorithm-infographic

- How Google Processes Queries and Ranks Content - Eight Oh Two, accessed on April 23, 2025, https://eightohtwo.com/blog/how-google-processes-queries-and-ranks-content/

- Learn What Relevant Means For Natural Language Processing and SEO - BrightEdge, accessed on April 23, 2025, https://www.brightedge.com/blog/natural-language-processing

- Google's Hummingbird Update: How It Changed Search, accessed on April 23, 2025, https://www.searchenginejournal.com/google-algorithm-history/hummingbird-update/

- Query Understanding In NLP Simplified & How It Works [5 Techniques] - Spot Intelligence, accessed on April 23, 2025, https://spotintelligence.com/2024/04/03/query-understanding/